INTRODUCTION

The Growing Threat of Web Scraping – and What It Costs Businesses

Web scraping has become one of the most pervasive – and underreported – cybersecurity threats facing online businesses today. According to Imperva’s 2023 Bad Bot Report, automated bots account for 47.4% of all internet traffic, and bad bots – those designed to scrape, abuse, or attack – alone represent 30.2% of total web traffic globally. For industries that rely on real-time pricing, inventory, and listings data, the stakes are especially high.

The hospitality and travel sector is among the hardest hit. Research by Distil Networks (now part of Imperva) found that travel and hospitality websites see some of the highest bot traffic rates across all industries, with scraping bots often making up 20-40% of site visits. These bots don’t just steal data – they consume server bandwidth, inflate infrastructure costs, distort analytics, and, in high-frequency cases, cause website downtime that directly impacts revenue.

The financial impact is staggering. Gartner estimates that online businesses lose billions annually to data theft via scraping, with the cost compounded by brand damage, loss of competitive pricing advantage, and customer drop-off during outage periods. For a platform whose core value lies in its curated listing data, a single sustained scraping attack can expose months of strategic pricing work to competitors within hours.

Modern scraping bots have grown far more sophisticated than the simple crawlers of a decade ago. Today’s scrapers rotate IP addresses, mimic human browsing behavior, solve CAPTCHAs, and are often specifically engineered to evade standard Web Application Firewalls (WAFs) and cloud-based DDoS protection tools. This makes them nearly impossible to stop with off-the-shelf security products alone – and increasingly, businesses are discovering that a custom, behavior-driven approach is the only effective countermeasure.

The Problem No Standard Tool Could Catch

The client is one of India’s leading luxury villa rental platforms, offering curated properties across premium destinations. Like most fast-growing hospitality businesses, their website holds their most valuable asset – property listings, pricing, and availability data that drives bookings and revenue.

When a scraping bot started hitting their site repeatedly, the impact was immediate: their website kept crashing. Worse, the attacker had built the bot to change its IP address on every single request, making standard blocking methods useless.

The client reached out to Operisoft Technologies. Within 24 hours, we had a working solution live on their server – and the attacks stopped.

THE PROBLEM

A Bot That Kept Changing Its Identity

The scraping bot was not your typical automated crawler. It was specifically built to avoid detection:

- It sent a high volume of requests in rapid succession, putting enough load on the server to cause repeated crashes

- Every request came from a different IP address, so blocking by IP had no lasting effect

- The scraper was quietly copying the client’s listing data – pricing, property details, availability – giving competitors a free view into their business

- Standard cloud-based firewall tools could not flag the traffic because the bot’s behavior did not match typical attack signatures

- Real customers were being affected – the site was slow or unavailable during crash periods, directly hurting bookings

The bot’s IP kept changing with every request. No static blocklist could keep up. The only way to stop it was to look at how it behaved, not where it was coming from.
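To make the limitation concrete, here is a minimal sketch of why per-IP counting never fires against a bot that rotates its address on every request. The traffic is simulated, and the IP addresses, the `/listings` path, and the `PER_IP_LIMIT` threshold are illustrative assumptions, not values from the client's environment.

```python
from collections import Counter

def per_ip_counts(requests):
    """Count requests per source IP from (ip, path) pairs."""
    return Counter(ip for ip, _path in requests)

# Simulated burst: 1,000 requests, each from a different (made-up) IP address.
burst = [(f"10.0.{i // 256}.{i % 256}", "/listings") for i in range(1000)]

counts = per_ip_counts(burst)
PER_IP_LIMIT = 100  # hypothetical per-IP threshold a rate limiter might use

# A per-IP rule only blocks addresses that individually exceed the limit.
blocked = [ip for ip, n in counts.items() if n > PER_IP_LIMIT]

print(len(burst), max(counts.values()), len(blocked))  # 1000 1 0
```

The server absorbs all 1,000 requests, yet no single address is seen more than once, so an IP-keyed rule has nothing to act on.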

OUR SOLUTION

A Custom Detection Agent – Built for This Exact Attack

We did not try to fit this problem into an existing tool. We built something specifically for this client’s situation.

Our team wrote a lightweight monitoring script that ran directly on their server, watching live web traffic in real time. Instead of looking at IP addresses, it studied request patterns – how fast requests came in, which pages were being hit, how the traffic behaved over time.

When the script detected the scraper’s pattern, it blocked that IP immediately. When the bot switched to a new IP and resumed, the pattern showed up again – and it was blocked again. The script adapted continuously, staying ahead of the attacker without needing any manual intervention.

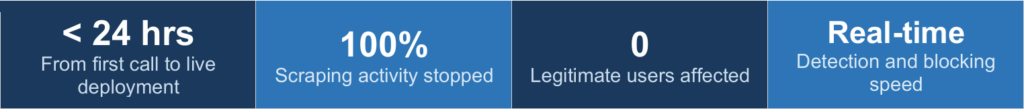

- Built and deployed entirely within 24 hours of the initial call

- Ran directly on the client’s server with no third-party dependency

- Detected the scraper by its traffic behavior, not its IP address

- Blocked malicious requests in real time, automatically

- Had zero impact on normal visitors browsing the site
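The general idea of behavior-based blocking can be sketched as follows. This is an illustration under stated assumptions, not the script that was deployed: the `BehaviorDetector` class, the fingerprint (user agent plus top-level site section), the window size, and the threshold are all hypothetical choices. The sketch counts requests per behavioral fingerprint in a sliding time window and, once a fingerprint runs hot, blocks whichever IP presents it next.

```python
from collections import defaultdict, deque

class BehaviorDetector:
    """Flags traffic by request fingerprint and rate rather than by source IP."""

    def __init__(self, window_seconds=10.0, max_requests=50):
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self._hits = defaultdict(deque)  # fingerprint -> timestamps in window
        self.blocked_ips = set()

    @staticmethod
    def fingerprint(user_agent, path):
        # Hypothetical behavioral key: client signature plus site section hit.
        section = "/" + path.strip("/").split("/")[0]
        return (user_agent, section)

    def observe(self, ip, user_agent, path, now):
        """Record one request; return True if it should be blocked."""
        if ip in self.blocked_ips:
            return True
        hits = self._hits[self.fingerprint(user_agent, path)]
        hits.append(now)
        # Drop timestamps that have fallen out of the sliding window.
        while hits and now - hits[0] > self.window_seconds:
            hits.popleft()
        if len(hits) > self.max_requests:
            # The pattern is hot: block the IP currently carrying it.
            self.blocked_ips.add(ip)
            return True
        return False

# Simulated attack: 200 requests in 2 seconds, each from a new IP address.
detector = BehaviorDetector(window_seconds=10.0, max_requests=50)
blocked = 0
for i in range(200):
    ip = f"203.0.113.{i}"  # documentation address range, for illustration
    if detector.observe(ip, "scraper-ua/1.0", f"/listings/{i}", now=i * 0.01):
        blocked += 1

# A visitor with a different fingerprint is unaffected.
human_blocked = detector.observe("198.51.100.7", "Mozilla/5.0", "/listings/42", now=2.0)
print(blocked, human_blocked)  # 150 False
```

Because the counter is keyed on behavior, rotating the IP does not reset it: each fresh address is blocked on its first request once the pattern has crossed the threshold. A fingerprint this coarse could also catch legitimate visitors who share it, so a real deployment would need a more specific signature tuned to the traffic actually observed.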

KEY OUTCOMES

The Crashes Stopped. The Data Was Secure.

From the moment the script went live, the attack lost its effectiveness. The bot kept trying, kept switching IPs – and kept getting blocked. The client’s site came back to full stability, and their listing data stopped being scraped.

There was no disruption to real users throughout the process. The fix worked silently in the background, exactly as intended.

- Website crashes stopped completely after deployment

- Scraping of listing data was cut off

- Site returned to full availability for real customers

- No legitimate user traffic was blocked or affected

- No ongoing manual work needed – the script ran autonomously

KEY METRICS

By the Numbers

BUSINESS ENVIRONMENT

Why This Kind of Attack Happens

In the online travel and hospitality space, data is the product. A platform’s listings, pricing strategy, and availability windows represent months of curation and business decisions. That data has real value to competitors.

Scraping attacks in this industry are not random – they are usually deliberate. Someone wants to know what you are charging, what properties you have, and how your availability looks. With that information, they can undercut your pricing, copy your catalog, or simply monitor your business moves.

For a platform like this one – growing quickly and building its brand around unique villa experiences – protecting that data is not just a technical concern. It is a business one.

This case also shows something worth noting: highly adaptive bots can slip past conventional firewall rules. When an attacker builds their tool specifically to avoid standard detection methods, the response has to go deeper than a ruleset. It requires understanding the traffic and writing logic tailored to what you are actually seeing.

CONCLUSION

Sometimes the Right Solution Has to Be Built From Scratch

This client’s situation was straightforward in one sense – they had a bot problem that was crashing their site. But solving it required more than switching on a setting or updating a firewall rule.

We looked at the traffic, understood the pattern, and built a script that addressed the actual attack. It was ready in under a day, it worked immediately, and it kept working as the attacker tried different approaches.

That is what Operisoft does: we look at the real problem, not the assumed one, and we build what the situation actually needs.

We did not try to fit this into an existing product. We looked at exactly what was happening and built the fix for it.